Summary

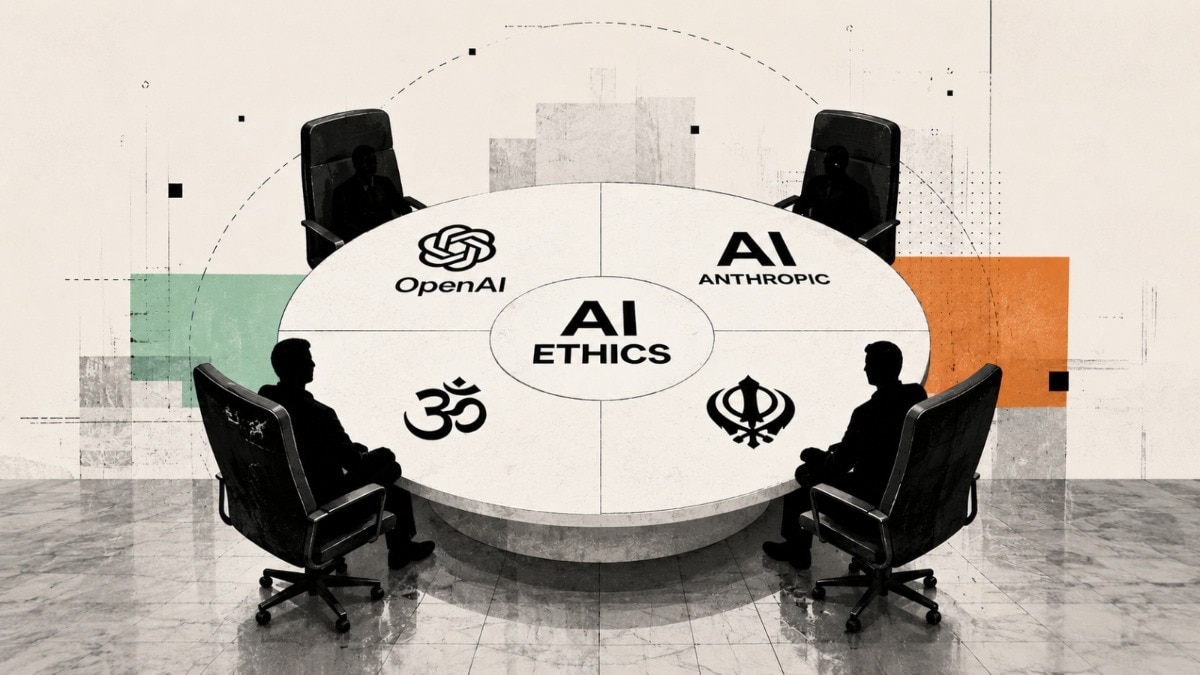

AI can do a lot on its own. It can write code or carry out complex calculations. What AI still can't do is understand what is right or wrong. For that reason, the companies that make AI, such as Anthropic and OpenAI, are tasked with training their models so that they understand moral val…

Source: India Today

AI News Q&A

Q1: What was the primary objective of the 'Faith-AI Covenant' roundtable organized by Anthropic and OpenAI?

A1: The primary objective of the 'Faith-AI Covenant' roundtable, organized by Anthropic and OpenAI, was to discuss how AI can be developed in a way that reflects human values and moral responsibility. This meeting brought together representatives from various religious groups, including the Hindu and Sikh communities, to explore ethical AI development beyond just regulatory measures.

Q2: How does Anthropic's 'Claude' language model incorporate ethical considerations?

A2: Anthropic's 'Claude' language model incorporates ethical considerations through a technique known as 'constitutional AI', in which the model is trained to follow a written set of principles (a "constitution"). The approach aims to improve the model's ethical and legal compliance and to align it with moral values. Claude is a series of large language models developed by Anthropic and used for various applications, including software development, with an emphasis on ethical AI alignment.

Q3: What has been OpenAI's contribution to generative AI, and how has it influenced the industry?

A3: OpenAI has significantly contributed to generative AI through the development of the GPT family of large language models, the DALL-E series of text-to-image models, and the Sora series of text-to-video models. These innovations have influenced industry research and commercial applications, catalyzing widespread interest in generative AI, particularly following the release of ChatGPT in November 2022.

Q4: How are Anthropic and OpenAI addressing the challenge of aligning AI with human moral values?

A4: Anthropic and OpenAI are addressing the challenge of aligning AI with human moral values by engaging with religious leaders from various faiths to gain insights and guidance on ethical AI development. This approach goes beyond technical solutions, emphasizing the integration of moral expertise in shaping the ethical framework for AI models.

Q5: What are some of the challenges faced by OpenAI regarding AI safety and ethical concerns?

A5: OpenAI has faced challenges related to AI safety and ethical concerns, including multiple lawsuits for alleged copyright infringement and internal dissent over the deprioritization of safety goals. These issues have led to significant changes within the organization, including the restructuring of its leadership and a shift in focus to address these ethical challenges more effectively.

Q6: What role does the Interfaith Alliance for Safer Communities play in the development of ethical AI?

A6: The Interfaith Alliance for Safer Communities plays a pivotal role in the development of ethical AI by organizing events like the 'Faith-AI Covenant' roundtable. This Geneva-based organization facilitates dialogue between tech companies and religious leaders to ensure that AI development is aligned with moral and ethical standards, thereby promoting safer AI practices.

Q7: What future initiatives are planned following the 'Faith-AI Covenant' roundtable?

A7: Following the 'Faith-AI Covenant' roundtable, future initiatives include organizing similar events in cities such as Beijing, Nairobi, and Abu Dhabi. These initiatives aim to continue the dialogue on ethical AI development with a broader range of religious and cultural perspectives, fostering a global approach to integrating moral values in AI technologies.