Summary

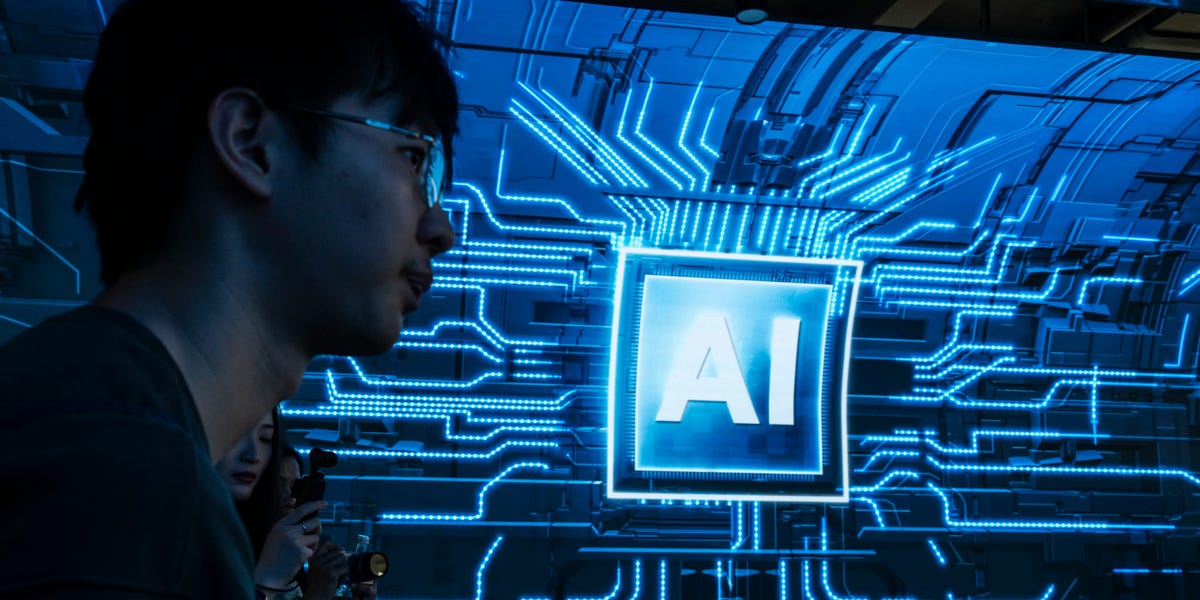

Business Insider reports that major AI labs are recruiting philosophy majors into roles that shape how models behave, with some positions advertised at six-figure salaries. The article quotes future-of-work expert Ravin Jesuthasan, "This is definitely a growing trend," and cites critics who say…

Source: Lets Data Science

AI News Q&A (Free Content)

Q1: What are some key ethical considerations in the development and deployment of AI technologies?

A1: The ethics of artificial intelligence covers a wide range of high-stakes topics. These include algorithmic bias, fairness, accountability, transparency, privacy, and regulation, especially where AI influences or automates human decision-making. Emerging challenges include machine ethics, AI safety and alignment, and AI-enabled misinformation. These questions are particularly pressing in sectors such as healthcare, education, criminal justice, and the military.

Q2: Why are AI labs increasingly recruiting philosophy majors for ethics roles?

A2: AI labs are recruiting philosophy majors to address the complex ethical issues associated with AI technologies. Philosophy majors bring strengths in ethical reasoning, analytical rigor, and holistic thinking, which are crucial for shaping AI models' behaviors and values. This trend highlights the importance of incorporating humanistic perspectives into the development of AI to ensure responsible and ethical outcomes.

Q3: What are the proposed strategies for ethical AI use in scientific research mentioned in recent scholarly articles?

A3: Recent scholarly articles propose several strategies for the ethical use of AI in scientific research. These include understanding how models are trained and how they generate output in order to mitigate bias, respecting privacy and confidentiality, avoiding plagiarism, using AI only where it offers a clear benefit over alternative methods, and ensuring transparency and reproducibility. These strategies aim to bridge the gap between abstract ethical principles and everyday research practice.

Q4: How does OpenAI's approach to AI ethics differ from other AI organizations?

A4: OpenAI's approach to AI ethics centers on safety and risk management, often without drawing on broader ethics frameworks from academia or advocacy groups. Unlike some organizations that hire philosophers to tackle ethical questions, OpenAI treats safety largely as an engineering challenge. This approach may invite criticism of 'ethics-washing', in which ethical considerations are addressed superficially rather than substantively.

Q5: What are some of the emerging ethical issues related to AI that scholars are currently exploring?

A5: Scholars are exploring a range of emerging ethical issues related to AI, such as the impact of AI on privacy, the potential for AI to perpetuate or exacerbate biases, and the ethical implications of AI in decision-making processes. Additionally, there is a focus on the societal impact of AI, including technological unemployment and the ethical treatment of AI systems if they attain a form of moral status.

Q6: How are institutions like the Alan Turing Institute addressing ethical challenges in AI?

A6: Institutions like the Alan Turing Institute are addressing ethical challenges in AI by hiring philosophy majors to help guide public policy on AI ethics and safety. These roles involve helping governments and organizations navigate complex ethical issues in AI policymaking, so that ethical considerations are integrated into how AI technologies are developed and deployed.

Q7: What role do philosophy majors play in shaping AI technologies at companies like Google DeepMind and Anthropic?

A7: At companies like Google DeepMind and Anthropic, philosophy majors play a crucial role in addressing ethical and societal questions posed by advanced AI technologies. These companies are recruiting philosophers to ensure that ethical considerations are integrated into AI development processes, highlighting the importance of interdisciplinary approaches in technology development.