Summary

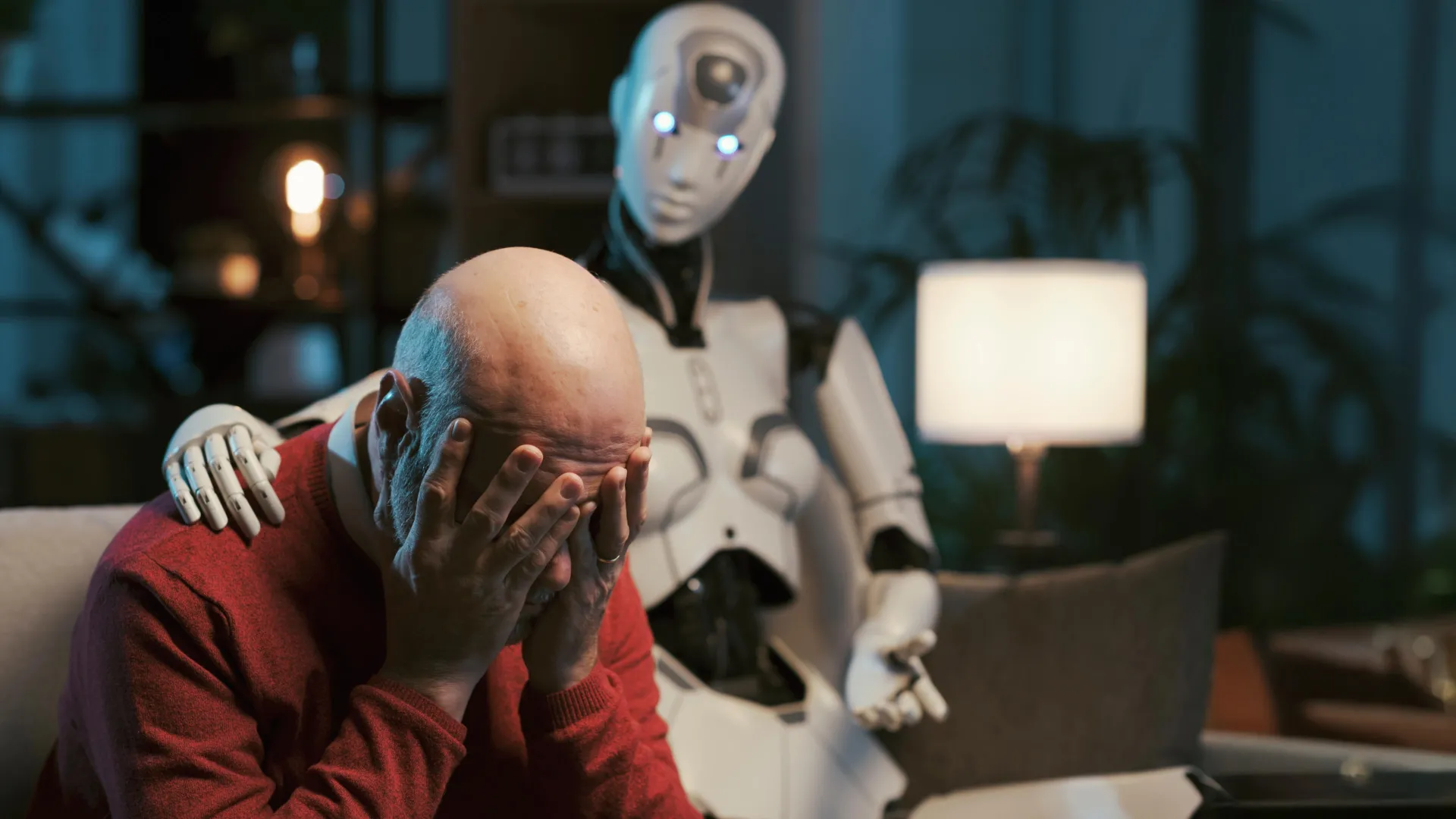

As more people seek mental health advice from ChatGPT and other large language models (LLMs), new research suggests these AI chatbots may not be ready for that role. The study found that even when instructed to use established psychotherapy approaches, the systems consistently fail to meet professio…

Source: ScienceDaily

AI News Q&A

Q1: What are some of the ethical concerns raised by using ChatGPT as a mental health therapist?

A1: Using ChatGPT as a mental health therapist raises several ethical concerns, including the risk of mishandling crisis situations, providing misleading responses, and creating a false sense of empathy. No established regulatory framework covers AI counselors, so they are not held accountable in the way human therapists are. The result can be violations of ethical standards and potential harm to users.

Q2: How do AI chatbots like ChatGPT handle crisis situations in mental health therapy, and what are the potential risks?

A2: AI chatbots like ChatGPT often struggle to handle crisis situations in mental health therapy. They may give generic responses that fail to address the specifics of a user's crisis, potentially reinforcing harmful beliefs or failing to refer users to appropriate resources. These failures pose significant psychological risks and underscore the need for human oversight in AI-assisted therapy.

Q3: What are the regulatory challenges associated with using AI like ChatGPT in mental health care?

A3: The regulatory challenges of using AI like ChatGPT in mental health care include the absence of clear rules for assigning liability in cases of harm, potential breaches of patient privacy, and the lack of comprehensive regulatory frameworks to ensure ethical compliance. These challenges highlight the need for collaboration among government, healthcare departments, and AI companies to establish appropriate legal and ethical boundaries.

Q4: What potential benefits and risks does ChatGPT present in the context of mental health therapy according to recent research?

A4: ChatGPT presents potential benefits in mental health therapy, such as increasing access to care and reducing barriers related to cost and availability. However, risks include the possibility of inaccurate information, lack of emotional depth, and ethical concerns related to privacy and bias. Recent research emphasizes the need for careful implementation and regulation to balance these benefits and risks.

Q5: How does the lack of human elements in AI therapy affect the therapeutic process according to recent studies?

A5: Recent studies indicate that the lack of human elements in AI therapy, such as empathy and reflective ability, can undermine trust and the therapeutic relationship. AI chatbots may fail to provide the emotional support and nuanced understanding that human therapists offer, raising concerns about their effectiveness in delivering mental health treatments.

Q6: What are the implications of using AI chatbots for mental health support on the patient-therapist relationship?

A6: Using AI chatbots for mental health support may disrupt the traditional patient-therapist relationship by replacing human interaction with automated responses. This can impact the dynamics of trust, compassion, and communication essential for effective therapy. Additionally, overreliance on AI may erode the perceived value of humanistic care and professional expertise in mental health treatment.

Q7: What are the key recommendations for improving the ethical use of AI in mental health therapy?

A7: Key recommendations for improving the ethical use of AI in mental health therapy include establishing regulatory frameworks to ensure accountability, enhancing transparency and disclosure of AI-generated content, and implementing regular monitoring of AI systems. Additionally, integrating human oversight and improving cultural competence and safety standards are crucial for minimizing potential harm and maximizing the therapeutic benefits of AI.

References:

- ScienceDaily - ChatGPT as a therapist? New study reveals serious ethical risks

- PMC - ChatGPT in health care: Ethical challenges

- Nature - Ethical concerns and risks of AI in therapy

- PMC - Ethical implications of AI in psychotherapy

- APA Services - AI chatbots as therapists: Regulatory concerns

- Psychiatry - AI Counselors Cross Ethical Lines

- Brown University - AI mental health ethics study